At the SC20 conference, Cerebras Systems, in collaboration with researchers at the National Energy Technology Laboratory (NETL), showed that a single wafer-scale Cerebras CS-1 could outperform one of the fastest supercomputers in the U.S. by more than 200 times.

The Cerebras CS-1 is the world’s first wafer-scale computer system. It is 26 inches tall, fits in a standard data center rack, and is powered by a single Cerebras Wafer Scale Engine (WSE) chip. The WSE is the world’s largest chip: at 72 square inches (462 cm²), it is the largest square that can be cut from a 300 mm wafer. All processing, memory, and core-to-core communication occur on the wafer, which holds 1.2 trillion transistors.

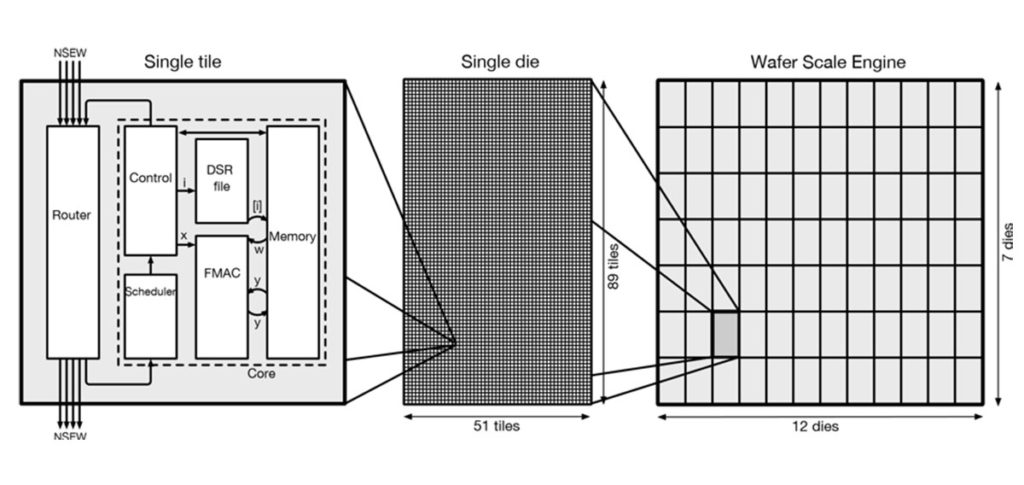

The wafer holds almost 400,000 individual processor cores, each with its own private memory and a network router. The cores form a square mesh, and each router connects to the routers of the four nearest cores in the mesh. The cores share nothing; they communicate only via messages sent through the mesh.
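The mesh layout described above can be illustrated with a small sketch. This is not Cerebras code: the function names `mesh_neighbors` and `hop_count`, the tiny 4×4 grid, and the assumptions that edge cores simply have fewer links (no wraparound) and that messages take a shortest path are all hypothetical choices made for illustration.

```python
def mesh_neighbors(row, col, size):
    """Return the cores directly linked to core (row, col) in a size x size mesh.

    Assumption: no wraparound links, so edge and corner cores have fewer
    than four neighbors.
    """
    candidates = [(row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1)]
    return [(r, c) for r, c in candidates if 0 <= r < size and 0 <= c < size]


def hop_count(src, dst):
    """Minimum number of router-to-router hops between two cores.

    Assumption: a message follows a shortest path, so the hop count is the
    Manhattan distance between the two mesh coordinates.
    """
    return abs(src[0] - dst[0]) + abs(src[1] - dst[1])


if __name__ == "__main__":
    size = 4  # hypothetical toy mesh; the real wafer has almost 400,000 cores
    print(mesh_neighbors(1, 1, size))   # interior core: four neighbors
    print(mesh_neighbors(0, 0, size))   # corner core: two neighbors
    print(hop_count((0, 0), (3, 3)))    # corner-to-corner distance
```

Under these assumptions, a message between opposite corners of an N×N mesh crosses 2(N−1) links, which is one reason nearest-neighbor communication patterns suit this kind of fabric.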

The Cerebras CS-1 is aimed especially at scientific research and science-related projects. The machine can solve a large, sparse, structured system of linear equations of the sort that arises when modeling physical phenomena, such as fluid dynamics, with a finite-volume method on a regular three-dimensional mesh. Solving these equations is fundamental to such efforts as forecasting the weather; finding the best shape for an airplane’s wing; predicting the temperatures and radiation levels in a nuclear power plant; modeling combustion in a coal-burning power plant; and imaging the layers of sedimentary rock in places likely to contain oil and gas.
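To make "sparse, structured system on a regular three-dimensional mesh" concrete, here is a minimal pure-Python sketch. It assumes a 7-point stencil (each cell coupled to its six face neighbors, with zero values outside the grid) and solves the system with plain Jacobi iteration. Both are illustrative choices: the article does not say which stencil or solver the NETL and Cerebras work used, and the names `apply_stencil` and `jacobi_solve` are hypothetical.

```python
def apply_stencil(u, n):
    """Compute A @ u for a 7-point stencil on an n x n x n grid.

    Each row of A has 6 on the diagonal and -1 for every face neighbor that
    lies inside the grid, so A is never stored explicitly.
    """
    def idx(i, j, k):
        return (i * n + j) * n + k

    offsets = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))
    out = [0.0] * (n ** 3)
    for i in range(n):
        for j in range(n):
            for k in range(n):
                total = 6.0 * u[idx(i, j, k)]
                for di, dj, dk in offsets:
                    a, b, c = i + di, j + dj, k + dk
                    if 0 <= a < n and 0 <= b < n and 0 <= c < n:
                        total -= u[idx(a, b, c)]
                out[idx(i, j, k)] = total
    return out


def jacobi_solve(b, n, iterations=200):
    """Approximately solve A u = b with Jacobi iteration (diagonal of A is 6)."""
    u = [0.0] * (n ** 3)
    for _ in range(iterations):
        au = apply_stencil(u, n)
        # Jacobi update: u <- u + D^{-1} (b - A u)
        u = [ui + (bi - aui) / 6.0 for ui, bi, aui in zip(u, b, au)]
    return u


if __name__ == "__main__":
    n = 4  # toy grid; production meshes are far larger
    x_true = [float(i % 5) for i in range(n ** 3)]
    b = apply_stencil(x_true, n)
    x = jacobi_solve(b, n)
    print(max(abs(p - q) for p, q in zip(x, x_true)))  # small residual error
```

Each cell only ever reads its six neighbors, so the data dependencies are purely local. That locality is what makes this class of problem a natural fit for a mesh of cores that exchange messages with their nearest neighbors.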

Cerebras attributes the computer’s speed to three factors: the CS-1’s memory performance, the high bandwidth and low latency of the on-wafer communication fabric, and a processor architecture optimized for high-bandwidth computing.

The trade-off, of course, is a chip about 60 times the size of a large conventional chip such as a CPU or GPU. The WSE was built to provide a much-needed breakthrough in computer performance for deep learning.

The researchers used the CS-1 for sparse linear algebra, which is typically used in computational physics and other scientific applications. Using the wafer, they achieved performance more than 200 times that of NETL’s Joule 2.0 supercomputer. Joule is the 24th-fastest supercomputer in the U.S. and the 82nd-fastest on a list of the world’s top 500 supercomputers. It uses Intel Xeon chips with 20 cores per chip, for a total of 16,000 cores.