Benchmarks for evaluating the artificial intelligence systems that control robots have some disadvantages. They rely on specialized hardware that is very costly, and many of the benchmark tests are limited to high-cost, industrial-quality robots.

To address this problem, researchers at UC Berkeley, in collaboration with Google Brain, have introduced Robotics Benchmarks for Learning with Low-Cost Robots (ROBEL). ROBEL is an open-source platform of cost-effective robots designed for reinforcement learning in the real world. It comes with benchmark tasks for evaluating AI systems that run on inexpensive, learning-based robots.

ROBEL serves as a rapid experimentation platform, supporting a wide range of experimental needs and the development of new reinforcement learning and control methods.

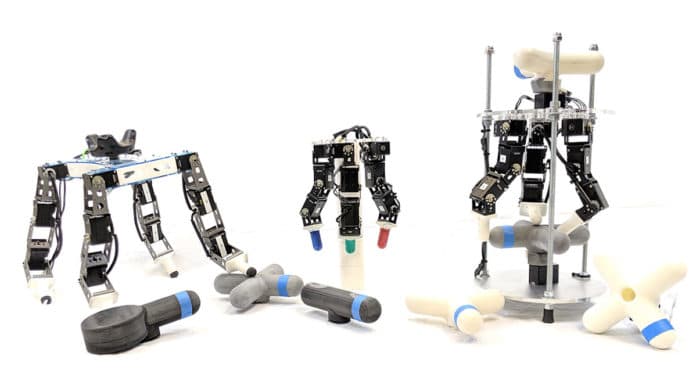

ROBEL introduces two robots: D’Claw, a three-fingered hand robot that facilitates learning of dexterous manipulation tasks, and D’Kitty, a four-legged robot that enables learning of agile legged locomotion tasks. D’Claw has 9 degrees of freedom (9 DoF) and D’Kitty has 12 (12 DoF). According to the researchers, these inexpensive modular robots are easy to maintain and robust enough to sustain on-hardware reinforcement learning from scratch.

The team has devised a set of tasks for each platform, D’Claw and D’Kitty, that can be used for benchmarking real-world robotic learning. These tasks feature both dense and sparse task objectives and additionally introduce score metrics such as hardware safety. The ROBEL benchmark tasks are Pose, Turn, and Screw for D’Claw, and Stand, Orient, and Walk for D’Kitty.
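To make the difference between dense and sparse task objectives concrete, here is a minimal Python sketch for a Pose-style task on a 9-joint hand. It does not use the ROBEL codebase, and the function names, the mean-absolute-error formula, and the `tolerance` value are illustrative assumptions rather than ROBEL's actual reward definitions.

```python
NUM_JOINTS = 9  # D'Claw has 9 degrees of freedom, per the article


def joint_errors(current, target):
    """Absolute per-joint error between two joint-angle lists (radians)."""
    if len(current) != len(target):
        raise ValueError("current and target must have the same length")
    return [abs(c - t) for c, t in zip(current, target)]


def dense_reward(current, target):
    """Hypothetical dense objective: negative mean joint error.

    Gives a graded signal at every step, so getting closer always helps.
    """
    errors = joint_errors(current, target)
    return -sum(errors) / len(errors)


def sparse_reward(current, target, tolerance=0.1):
    """Hypothetical sparse objective: 1.0 only when every joint is in tolerance.

    Gives no signal until the pose is essentially reached.
    """
    errors = joint_errors(current, target)
    return 1.0 if all(e <= tolerance for e in errors) else 0.0


if __name__ == "__main__":
    target = [0.0] * NUM_JOINTS
    for name, pose in [("near", [0.05] * NUM_JOINTS), ("far", [0.5] * NUM_JOINTS)]:
        print(name, dense_reward(pose, target), sparse_reward(pose, target))
```

With a dense objective the learner is rewarded for every small improvement, while a sparse objective only pays out on success, which is typically harder to learn from but easier to specify.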

ROBEL also provides a simulator for all tasks to facilitate algorithm development and rapid prototyping, the team wrote in a blog post, where more details are available.