Nuclear power meets approximately one-fifth of U.S. electricity demand and supplies nearly half of the country’s clean energy. Most of the nuclear reactors that generate this electricity were built decades ago and are still operating quietly, safely, and efficiently.

Building new nuclear reactors that use advanced technologies and processes could increase the amount of carbon-free energy that the nuclear industry produces and help the U.S. achieve a net-zero economy. However, designing and building a new reactor is time-consuming and requires extensive computer simulations.

Researchers at the U.S. Department of Energy’s Argonne National Laboratory are planning to utilize Aurora, the lab’s upcoming exascale supercomputer, to explore the internal workings of various nuclear reactor models.

The simulations are expected to offer an unparalleled level of detail, yielding insights into the complex heat flows within nuclear fuel rods that could transform reactor design. This, in turn, could lead to significant cost savings while still ensuring safe electricity generation.

The lab currently relies on the Polaris supercomputer for its simulations. Polaris has a peak performance of 44 petaflops, which means it can complete 44 quadrillion calculations per second.

Aurora is expected to be far more powerful, with a peak performance of over two exaflops, or more than two quintillion calculations per second. That is at least 45 times the peak performance of Polaris. Once operational, it’s expected to lead the TOP500 list that ranks the most powerful computers in the world.
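The gap between the two machines can be checked with a few lines of arithmetic. The sketch below uses only the peak figures quoted in this article (44 petaflops for Polaris and two exaflops for Aurora); because Aurora is described as "over two exaflops," the result should be read as a lower bound rather than a measured speedup. The variable names are illustrative.

```python
# Unit prefixes expressed as calculations per second.
PETA = 10**15  # one quadrillion
EXA = 10**18   # one quintillion

# Peak figures quoted in the article (Aurora's is a stated lower bound).
polaris_peak = 44 * PETA
aurora_peak = 2 * EXA

# Ratio of peak performance: how many times faster Aurora would be.
speedup = aurora_peak / polaris_peak

print(f"Aurora / Polaris peak ratio: about {speedup:.1f}x")
```

For exactly two exaflops, the ratio comes to roughly 45; any capacity beyond two exaflops pushes it higher.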

“What’s really new with Aurora are both the scale of the simulations we’re going to be able to do as well as the number of simulations,” said Argonne nuclear engineer Dillon Shaver. “In working on Polaris – Argonne’s current pre-exascale supercomputer – and Aurora, these very large-scale simulations are becoming commonplace for us, which is quite exciting.”

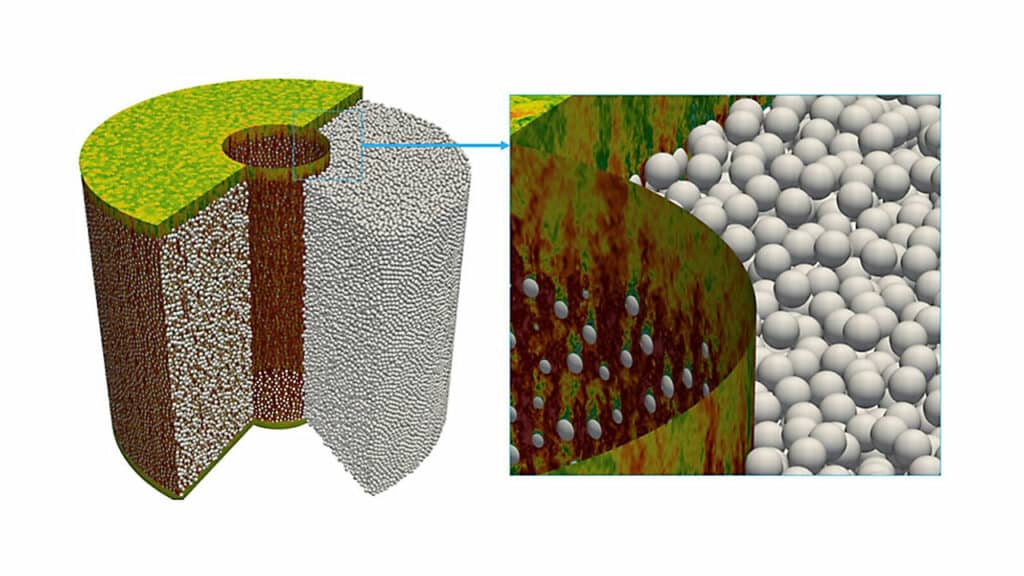

Shaver and his team aim to use Aurora’s computational power to run simulations with tens of millions of discrete elements and billions of unknowns. They want to model large sections of the nuclear reactor core with high fidelity (the amount of detail a simulation can capture), which requires an exascale machine to compute all the physics on the finest length scales.

These simulations will ultimately give companies that seek to build commercial reactors a greater ability to validate and license their designs, Shaver explained. “Reactor vendors are looking to us to do these high-fidelity simulations in lieu of expensive experiments, with the hope that as we move to a new era in computing, they will be able to have data that strongly backs up their proposals,” he said in the press release.

By simulating the turbulence in the reactor’s coolant flow, researchers can model how effectively the reactor transfers heat. A more turbulent flow carries away more heat, but driving that flow also requires more energy.
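The trade-off between heat transfer and energy cost can be illustrated with two textbook correlations for turbulent flow in a smooth pipe. This is only a rough sketch and not the method used by the Argonne team, whose simulations resolve the flow directly. It assumes the Dittus-Boelter relation (Nusselt number proportional to Re^0.8) for heat transfer and the Blasius friction factor (proportional to Re^-0.25) for pressure drop, with fixed geometry and fluid properties. Real reactor rod bundles and liquid-metal coolants behave differently, and the function names are hypothetical.

```python
def heat_transfer_gain(re_ratio: float) -> float:
    """Relative increase in the heat transfer coefficient.

    Assumes Dittus-Boelter scaling, Nu ~ Re**0.8, at a fixed Prandtl number.
    """
    return re_ratio ** 0.8


def pumping_power_gain(re_ratio: float) -> float:
    """Relative increase in pumping power.

    Pumping power = pressure drop * volumetric flow ~ f * v**3.
    With the Blasius friction factor f ~ Re**-0.25 and v ~ Re,
    this scales as Re**2.75.
    """
    return re_ratio ** 2.75


# Example: double the Reynolds number (a more turbulent flow).
ratio = 2.0
print(f"Heat transfer: x{heat_transfer_gain(ratio):.2f}")
print(f"Pumping power: x{pumping_power_gain(ratio):.2f}")
```

Under these assumptions, doubling the Reynolds number improves heat transfer by less than a factor of two while raising the pumping power several times over, which is why designers need detailed data on where turbulence actually pays off.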

The team will use the Multiphysics Object-Oriented Simulation Environment (MOOSE), a framework designed to make modeling and simulation more accessible to a wide range of scientists. MOOSE has revolutionized predictive modeling in nuclear engineering by allowing nuclear fuels and materials scientists to develop numerous applications that predict the behavior of fuels and materials.

According to Shaver, the design of Aurora will allow scientists to run MOOSE and NekRS – a computational fluid dynamics solver – simultaneously, taking advantage of the computer’s mixed CPU/GPU nodes.

Being able to resolve all the little details in the flow in a reactor can make an enormous difference when it comes to designing either next-generation light water reactors or new reactor designs, such as those cooled by sodium or molten salt.