LiDAR – short for Light Detection and Ranging – is now a well-established range-finding technology that recognizes objects by projecting light onto them. The LiDAR sensor functions as an eye for autonomous vehicles by helping them identify the distance to surrounding objects and the speed or direction of the vehicle. The sensor must perceive the sides and rear as well as the front of the vehicle to detect unpredictable conditions on the road and respond.

However, according to researchers at the Pohang University of Science and Technology (POSTECH) in South Korea, conventional LiDAR sensors rotate, so they cannot observe the front and rear of the vehicle simultaneously. To overcome this issue, a POSTECH research team has developed a fixed LiDAR sensor with a 360° view that can sense in all directions at once.

The ultra-small, ultra-thin LiDAR device is only one-thousandth the thickness of a human hair strand. The research team succeeded in extending the viewing angle of the LiDAR sensor to 360° by modifying the design and periodically arranging the nanostructures that make up the metasurface.

The metasurface projects an array of more than 10,000 dots of light onto surrounding objects, which makes it possible to extract three-dimensional information about objects across the full 360° region. A camera then captures the reflected or backscattered light, and the sensor interprets it to provide distance measurements.
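To give a rough sense of how a periodic metasurface can produce a dot count on the order of 10,000, the sketch below counts the diffraction orders of a square lattice that propagate in air at normal incidence, using the standard grating condition. The function name, the 633 nm wavelength, and the periods are hypothetical values chosen for illustration; they are not taken from the POSTECH design.

```python
import math


def count_propagating_orders(wavelength_nm: float, period_nm: float) -> int:
    """Count diffraction orders (m, n) of a square lattice that propagate in air.

    Assumes normal incidence. Order (m, n) has in-plane direction cosines
    (m * wavelength / period, n * wavelength / period) and propagates only
    if the sum of their squares does not exceed 1.
    """
    max_order = math.floor(period_nm / wavelength_nm)
    count = 0
    for m in range(-max_order, max_order + 1):
        for n in range(-max_order, max_order + 1):
            # Equivalent to (m*lambda/P)^2 + (n*lambda/P)^2 <= 1,
            # written without division to limit rounding error.
            if (m * wavelength_nm) ** 2 + (n * wavelength_nm) ** 2 <= period_nm ** 2:
                count += 1
    return count


if __name__ == "__main__":
    # Hypothetical values: a period of 60 wavelengths at 633 nm.
    print(count_propagating_orders(633.0, 60 * 633.0))
    # A sub-wavelength period leaves only the zeroth order.
    print(count_propagating_orders(633.0, 300.0))
```

Under these assumptions the count grows roughly as π(P/λ)², so a period of a few tens of wavelengths is enough to reach about ten thousand dots spread over the whole forward hemisphere.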

“We have proved that we can control the propagation of light in all angles by developing a technology more advanced than the conventional metasurface devices,” said Professor Junsuk Rho, co-author of the research. “This will be an original technology that will enable an ultra-small and full-space 3D imaging sensor platform.”

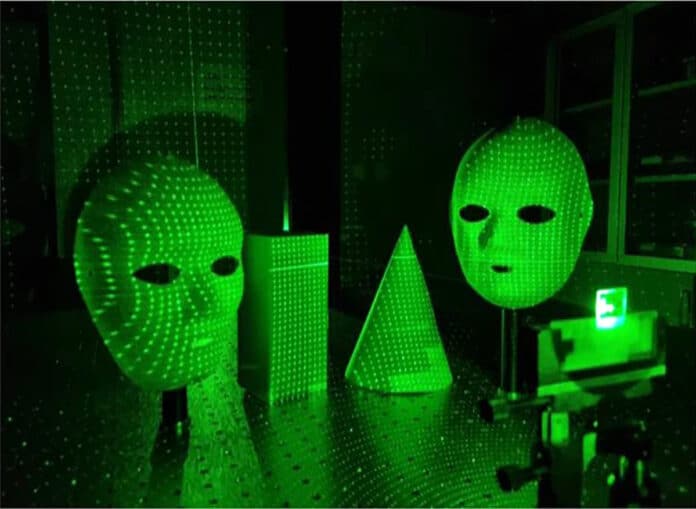

As a proof of concept, the researchers placed face masks within 1 m of the device, at viewing angles of up to 60° from the optical axis, and illuminated them with the high-density dot arrays generated by the proposed metasurfaces. The depth information of the 3D face masks was then extracted with a stereo-matching algorithm using two cameras.
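The article does not say which stereo-matching algorithm the team used. As a minimal sketch of the general idea, the example below matches a small window along one scanline of a rectified image pair using the sum of absolute differences (SAD), then converts the resulting disparity to depth with Z = f·B/d. All names, the focal length, and the baseline are hypothetical.

```python
def match_disparity(left_row, right_row, x, half_window, max_disparity):
    """Find the disparity for pixel x of the left scanline by SAD block matching.

    Assumes a rectified pair, so a feature at column x in the left image
    appears at column x - d in the right image for some disparity d >= 0.
    """
    best_d, best_cost = 0, float("inf")
    start_left = x - half_window
    width = 2 * half_window + 1
    for d in range(max_disparity + 1):
        start_right = start_left - d
        if start_right < 0:
            break  # candidate window would fall off the right image
        cost = sum(
            abs(left_row[start_left + k] - right_row[start_right + k])
            for k in range(width)
        )
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Triangulate depth in metres: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive to triangulate depth")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Toy scanlines: the bright pattern 9, 5, 7 is shifted by 3 pixels.
    left = [0, 0, 0, 0, 0, 0, 9, 5, 7, 0, 0, 0]
    right = [0, 0, 0, 9, 5, 7, 0, 0, 0, 0, 0, 0]
    d = match_disparity(left, right, x=7, half_window=1, max_disparity=5)
    # Hypothetical camera: 800 px focal length, 10 cm baseline.
    print(d, depth_from_disparity(d, focal_length_px=800.0, baseline_m=0.1))
```

A dense projected dot pattern helps this kind of matching because it gives otherwise featureless surfaces, such as a plain mask, distinctive local texture for the window comparison to lock onto.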

Furthermore, the team demonstrated a prototype of the metasurface-based depth sensor for compact and lightweight AR glasses. The prototype was made with a scalable imprinting fabrication method based on nanoparticle-embedded resin (nano-PER), which allows the metasurface to be printed directly onto the curved surface of the glasses. According to the researchers, such a metasurface-based structured light imaging platform can image 3D objects over a full field of view with high-density dot arrays in an ergonomically and commercially viable form factor.

The technology allows cell phones, augmented and virtual reality (AR/VR) glasses, and unmanned robots to recognize the 3D information of the surrounding environment. By utilizing nanoimprint technology, it is easy to print the new device on various curved surfaces, such as glasses or flexible substrates.

Journal reference:

- Gyeongtae Kim, Yeseul Kim, Jooyeong Yun, Seong-Won Moon, Seokwoo Kim, Jaekyung Kim, Junkyeong Park, Trevon Badloe, Inki Kim, Junsuk Rho. Metasurface-driven full-space structured light for three-dimensional imaging. Nature Communications, 2022; DOI: 10.1038/s41467-022-32117-2