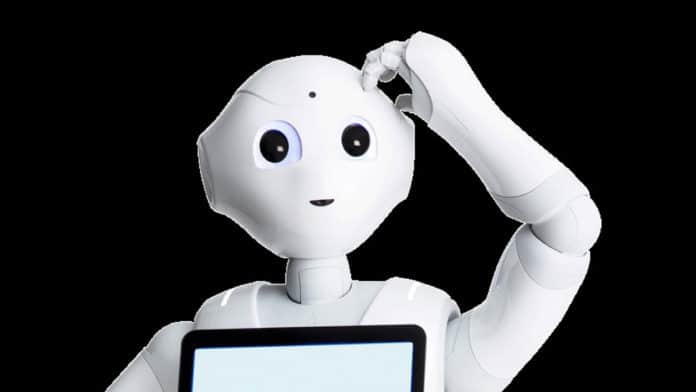

Italian researchers have programmed a humanoid robot named Pepper, made by SoftBank Robotics in Japan, to “think out loud” so that users can hear its thought process. Hearing a robot voice its decision-making process can increase transparency and trust between humans and machines.

Arianna Pipitone and Antonio Chella at the University of Palermo, Italy, built an “inner speech model” based on a cognitive architecture that allows the robot to speak its internal decision-making aloud, much as humans do when faced with a challenge or a dilemma. Listening to this inner speech helps users better understand the robot’s motivations and decisions.

“If you were able to hear what the robots are thinking, then the robot might be more trustworthy,” co-author Antonio Chella explained in a press release. “The robots will be easier to understand for laypeople, and you don’t need to be a technician or engineer. In a sense, we can communicate and collaborate with the robot better.”

In one experiment, the researchers asked Pepper to set a dinner table according to etiquette rules they had encoded into the robot. They found that, with the help of inner speech, Pepper was better at resolving dilemmas triggered by confusing human instructions that contradicted those rules.

When a user asked Pepper to place the napkin in the wrong spot, Pepper began asking itself a series of questions and concluded that the user might be confused. To make sure, Pepper asked the user to confirm the request, which led to further inner speech. “Ehm, this situation upsets me. I would never break the rules, but I can’t upset him, so I’m doing what he wants,” Pepper said to itself, then placed the napkin where the user had asked, prioritizing the user’s request over the etiquette rules.

The researchers compared Pepper’s performance with and without the speech function and found that the robot had a higher task-completion rate when engaging in self-dialogue.

Pipitone and Chella say their work provides a framework for further exploring how self-dialogue can help robots focus, plan, and learn. As robots potentially become more common, this type of programming could help people understand what robots can and cannot do.