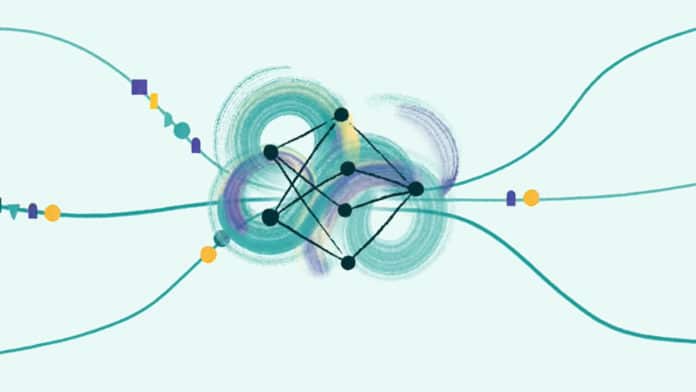

Unlike human memory, most neural networks process information indiscriminately. On a small scale, this works. But the mechanisms that current AI systems use to focus selectively on parts of their input struggle with ever-larger quantities of information and incur unsustainable computational costs.

For this reason, Facebook researchers want to help future AIs pay more attention to important data by assigning an expiration date to the information they store. They announced a novel deep learning method called Expire-Span, a first-of-its-kind operation that equips neural networks with the ability to forget at scale. It helps neural networks sort and store the information most pertinent to their assigned tasks more efficiently.

Expire-Span works by first predicting which information is most relevant to the task at hand. Based on the context, it then assigns an expiration date to each piece of information. Once that date has passed, the information gradually expires from the AI system, Angela Fan and Sainbayar Sukhbaatar, research scientists at FAIR, explained in a Friday blog post.
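To make the idea concrete, here is a minimal Python sketch of memory with expiration dates. The names `predict_span`, `surviving_memories`, and `MAX_SPAN` are hypothetical, and the sigmoid-scaled linear score is an assumption made for illustration, not FAIR's released code.

```python
import math

MAX_SPAN = 8  # assumed upper bound on how many steps a memory may live


def predict_span(hidden, weights, bias):
    """Map a hidden state to an expiration span between 0 and MAX_SPAN.

    A linear score is squashed by a sigmoid and scaled by MAX_SPAN.
    In a real model, `weights` and `bias` would be learned from data.
    """
    score = sum(w * h for w, h in zip(weights, hidden)) + bias
    return MAX_SPAN / (1.0 + math.exp(-score))


def surviving_memories(spans, now):
    """Return the indices of memories that have not expired at step `now`.

    Memory i is assumed to have been written at step i, so its age is
    now - i. It survives while its age does not exceed its span.
    """
    return [i for i, span in enumerate(spans) if now - i <= span]
```

With spans of `[1.0, 6.0, 0.5, 3.0]` at step 4, only memories 1 and 3 are still within their expiration dates; the others have been forgotten and no longer take up memory.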

In this way, information essential to the system's operation is retained longer, while irrelevant information expires more quickly, freeing up memory to focus on core tasks. Each time a new piece of information is added, the system evaluates not only its relative importance but also re-evaluates the importance of the existing data points in relation to the new one. This also helps the AI learn to use its available memory more efficiently, which makes the entire system easier to scale.

The main challenge with forgetting in AI is that it’s a discrete operation. Like the 1s and 0s that make up the AI’s code, the system can either forget a piece of information or not – there is no in-between. Optimizing such discrete operations is really hard. Previous approaches to this problem often involved compressing the less useful data so that it would take up less space in memory. While this allows the model to extend to longer ranges in the past, compression yields blurry versions of memory.
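The blurring effect is easy to see in a toy example. The sketch below assumes one simple compression scheme, averaging adjacent memories. The function name `compress` and the averaging rule are illustrative assumptions, not the method of any specific earlier system.

```python
def compress(memories, rate):
    """Shrink a list of memory values by averaging each group of `rate` items.

    The result is shorter, so it costs less to store, but any distinctive
    value gets mixed with its neighbors.
    """
    compressed = []
    for start in range(0, len(memories), rate):
        group = memories[start:start + rate]
        compressed.append(sum(group) / len(group))
    return compressed
```

Compressing `[1.0, 0.0, 0.0, 9.0]` at a rate of 2 gives `[0.5, 4.5]`: the memory is half the size, but the standout value 9.0 can no longer be recovered exactly.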

“Expire-Span calculates the information’s expiration value for each hidden state each time a new piece of information is presented and determines how long that information is preserved as a memory,” Fan and Sukhbaatar explained. “This gradual decay of some information is key to keeping important information without blurring it. And the learnable mechanism allows the model to adjust the span size as needed. Expire-Span calculates a prediction based on context learned from data and influenced by its surrounding memories.”

By letting irrelevant information fade gradually instead of vanishing in a single step, Expire-Span allows the otherwise discrete act of forgetting to be optimized continuously and efficiently.
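One way to picture the difference between abrupt and gradual forgetting is to compare a hard cutoff with a soft one. The Python sketch below assumes a linear ramp that fades a memory out over a fixed number of steps after its expiration date. The function names and the `ramp` parameter are hypothetical, chosen for illustration.

```python
def hard_weight(span, age):
    """All-or-nothing forgetting: 1.0 until the span runs out, then 0.0."""
    return 1.0 if age <= span else 0.0


def soft_weight(span, age, ramp):
    """Gradual forgetting: full weight until the span runs out, then a
    linear fade to zero over the next `ramp` steps.

    Because the weight changes smoothly with `span` inside the ramp,
    a gradient-based optimizer can nudge the span up or down.
    """
    return max(0.0, min(1.0, 1.0 + (span - age) / ramp))
```

For a span of 5 and a ramp of 4, a memory of age 7 still carries a weight of 0.5 under the soft rule, while the hard rule has already dropped it to 0.0.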

While the research is still in its early stages, “as the next step in our research toward more humanlike AI systems, we’re studying how to incorporate different types of memories into neural networks,” they added. In the future, the team hopes to bring AI even closer to humanlike memory, with the ability to learn much faster than current systems.